随着数据规模的不断膨胀,使用多节点集群的分布式方式逐渐成为趋势。在这种情况下,如何高效、自动化管理集群节点,实现不同节点的协同工作,配置一致性,状态一致性,高可用性,可观测性等,就成为一个重要的挑战。

集群管理的复杂性体现在,一方面我们需要把所有的节点,不管是底层数据库节点,还是中间件或者业务系统节点的状态都统一管理起来,并且能实时探测到最新的配置变动情况,进一步为集群的调控和调度提供依据。

另一方面,不同节点之间的统一协调,分库分表策略以及规则同步,也需要我们能够设计一套在分布式情况下,进行全局事件通知机制以及独占性操作的分布式协调锁机制。在这方面,ShardingJDBC采用了Zookeeper/Etcd来实现配置的同步,状态变更通知,以及分布式锁来控制排他操作。

ShardingJDBC分布式治理

ShardingJDBC集成了Zookeeper/Etcd,用来实现ShardingJDBC的分布式治理,下面我们先通过一个应用程序来演示一下实现原理。

安装Zookeeper

Sharding-JDBC集成Zookeeper

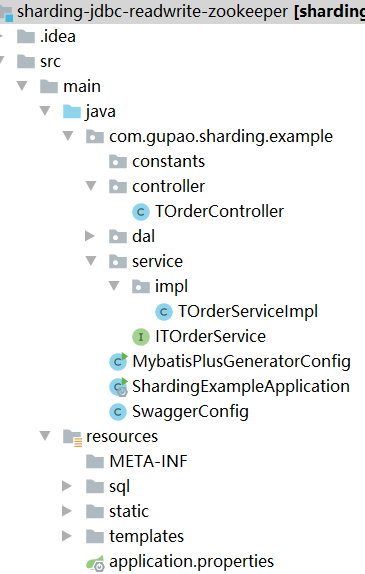

本阶段演示的项目代码:sharding-jdbc-split-zookeeper,项目结构如图9-1所示。

图9-1 项目结构

添加jar包依赖

引入jar包依赖(只需要依赖下面两个包即可)

<dependency>

<groupId>org.apache.shardingsphere</groupId>

<artifactId>shardingsphere-governance-repository-zookeeper-curator</artifactId>

<version>5.0.0-alpha</version>

</dependency>

<dependency>

<groupId>org.apache.shardingsphere</groupId>

<artifactId>shardingsphere-jdbc-governance-spring-boot-starter</artifactId>

<version>5.0.0-alpha</version>

<exclusions>

<exclusion>

<groupId>org.apache.shardingsphere</groupId>

<artifactId>shardingsphere-test</artifactId>

</exclusion>

</exclusions>

</dependency>

|

其他基础jar包(所有项目都是基于spring boot集成mybatis拷贝的)

<dependency>

<groupId>org.springframework.boot</groupId>

<artifactId>spring-boot-starter-web</artifactId>

</dependency>

<dependency>

<groupId>mysql</groupId>

<artifactId>mysql-connector-java</artifactId>

<scope>runtime</scope>

</dependency>

<dependency>

<groupId>com.baomidou</groupId>

<artifactId>mybatis-plus-boot-starter</artifactId>

<version>3.4.3</version>

</dependency>

<dependency>

<groupId>com.baomidou</groupId>

<artifactId>mybatis-plus-generator</artifactId>

<version>3.4.1</version>

</dependency>

<dependency>

<groupId>com.alibaba</groupId>

<artifactId>fastjson</artifactId>

<version>1.2.72</version>

</dependency>

<dependency>

<groupId>org.apache.commons</groupId>

<artifactId>commons-lang3</artifactId>

<version>3.9</version>

</dependency>

<dependency>

<groupId>org.projectlombok</groupId>

<artifactId>lombok</artifactId>

<version>1.18.12</version>

</dependency>

|

添加配置文件

增加基础的分库分表配置-application.properties

spring.shardingsphere.datasource.names=ds-0,ds-1

spring.shardingsphere.datasource.common.type=com.zaxxer.hikari.HikariDataSource

spring.shardingsphere.datasource.common.driver-class-name=com.mysql.jdbc.Driver

spring.shardingsphere.datasource.ds-0.username=root

spring.shardingsphere.datasource.ds-0.password=123456

spring.shardingsphere.datasource.ds-0.jdbc-url=jdbc:mysql://192.168.221.128:3306/shard01?serverTimezone=UTC&useSSL=false&useUnicode=true&characterEncoding=UTF-8

spring.shardingsphere.datasource.ds-1.username=root

spring.shardingsphere.datasource.ds-1.password=123456

spring.shardingsphere.datasource.ds-1.jdbc-url=jdbc:mysql://192.168.221.128:3306/shard02?serverTimezone=UTC&useSSL=false&useUnicode=true&characterEncoding=UTF-8

spring.shardingsphere.rules.sharding.default-database-strategy.standard.sharding-column=user_id

spring.shardingsphere.rules.sharding.default-database-strategy.standard.sharding-algorithm-name=database-inline

spring.shardingsphere.rules.sharding.tables.t_order.actual-data-nodes=ds-$->{0..1}.t_order_$->{0..1}

spring.shardingsphere.rules.sharding.tables.t_order.table-strategy.standard.sharding-column=order_id

spring.shardingsphere.rules.sharding.tables.t_order.table-strategy.standard.sharding-algorithm-name=t-order-inline

spring.shardingsphere.rules.sharding.tables.t_order.key-generate-strategy.column=order_id

spring.shardingsphere.rules.sharding.tables.t_order.key-generate-strategy.key-generator-name=snowflake

spring.shardingsphere.rules.sharding.sharding-algorithms.database-inline.type=INLINE

spring.shardingsphere.rules.sharding.sharding-algorithms.database-inline.props.algorithm-expression=ds-$->{user_id % 2}

spring.shardingsphere.rules.sharding.sharding-algorithms.t-order-inline.type=INLINE

spring.shardingsphere.rules.sharding.sharding-algorithms.t-order-inline.props.algorithm-expression=t_order_$->{order_id % 2}

spring.shardingsphere.rules.sharding.sharding-algorithms.t-order-item-inline.type=INLINE

spring.shardingsphere.rules.sharding.sharding-algorithms.t-order-item-inline.props.algorithm-expression=t_order_item_$->{order_id % 2}

spring.shardingsphere.rules.sharding.key-generators.snowflake.type=SNOWFLAKE

spring.shardingsphere.rules.sharding.key-generators.snowflake.props.worker-id=123

|

增加Zookeeper配置

spring.shardingsphere.governance.name=sharding-jdbc-split-zookeeper

spring.shardingsphere.governance.overwrite=true

spring.shardingsphere.governance.registry-center.type=ZooKeeper

spring.shardingsphere.governance.registry-center.server-lists=192.168.221.131:2181

spring.shardingsphere.governance.registry-center.props.maxRetries=4

spring.shardingsphere.governance.registry-center.props.retryIntervalMilliseconds=6000

|

启动项目进行测试

启动过程中看到如下日志,表示配置zookeeper成功,启动的时候会先把本地配置保存到zookeeper中,后续我们可以在zookeeper中修改相关配置,然后同步通知给到相关的应用节点。

2021-07-29 21:31:25.007 INFO 112916 --- [ main] org.apache.zookeeper.ZooKeeper : Client environment:java.io.tmpdir=C:\Users\mayn\AppData\Local\Temp\

2021-07-29 21:31:25.007 INFO 112916 --- [ main] org.apache.zookeeper.ZooKeeper : Client environment:java.compiler=<NA>

2021-07-29 21:31:25.007 INFO 112916 --- [ main] org.apache.zookeeper.ZooKeeper : Client environment:os.name=Windows 10

2021-07-29 21:31:25.007 INFO 112916 --- [ main] org.apache.zookeeper.ZooKeeper : Client environment:os.arch=amd64

2021-07-29 21:31:25.007 INFO 112916 --- [ main] org.apache.zookeeper.ZooKeeper : Client environment:os.version=10.0

2021-07-29 21:31:25.007 INFO 112916 --- [ main] org.apache.zookeeper.ZooKeeper : Client environment:user.name=mayn

2021-07-29 21:31:25.007 INFO 112916 --- [ main] org.apache.zookeeper.ZooKeeper : Client environment:user.home=C:\Users\mayn

2021-07-29 21:31:25.007 INFO 112916 --- [ main] org.apache.zookeeper.ZooKeeper : Client environment:user.dir=E:\教研-课件\vip课程\第五轮\03 高并发组件\09 ShardingSphere基于Zookeeper实现分布式治理\sharding-jdbc-readwrite-zookeeper

2021-07-29 21:31:25.007 INFO 112916 --- [ main] org.apache.zookeeper.ZooKeeper : Client environment:os.memory.free=482MB

2021-07-29 21:31:25.007 INFO 112916 --- [ main] org.apache.zookeeper.ZooKeeper : Client environment:os.memory.max=7264MB

2021-07-29 21:31:25.007 INFO 112916 --- [ main] org.apache.zookeeper.ZooKeeper : Client environment:os.memory.total=501MB

2021-07-29 21:31:25.009 INFO 112916 --- [ main] org.apache.zookeeper.ZooKeeper : Initiating client connection, connectString=192.168.221.131:2181 sessionTimeout=60000 watcher=org.apache.curator.ConnectionState@68e2d03e

2021-07-29 21:31:25.012 INFO 112916 --- [ main] org.apache.zookeeper.common.X509Util : Setting -D jdk.tls.rejectClientInitiatedRenegotiation=true to disable client-initiated TLS renegotiation

2021-07-29 21:31:25.020 INFO 112916 --- [ main] org.apache.zookeeper.ClientCnxnSocket : jute.maxbuffer value is 1048575 Bytes

2021-07-29 21:31:25.023 INFO 112916 --- [ main] org.apache.zookeeper.ClientCnxn : zookeeper.request.timeout value is 0. feature enabled=false

2021-07-29 21:31:25.030 INFO 112916 --- [ main] o.a.c.f.imps.CuratorFrameworkImpl : Default schema

|

接着访问如下接口进行测试。

@RestController

@RequestMapping("/t-order")

public class TOrderController {

@Autowired

ITOrderService orderService;

@GetMapping

public void init() throws SQLException {

orderService.initEnvironment();

orderService.processSuccess();

}

}

|

配置中心的数据结构说明

注册中心的数据结构如下

namespace: 就是spring.shardingsphere.governance.name

namespace

├──users # 权限配置

├──props # 属性配置

├──schemas # Schema 配置

├ ├──${schema_1} # Schema 名称1

├ ├ ├──datasource # 数据源配置

├ ├ ├──rule # 规则配置

├ ├ ├──table # 表结构配置

├ ├──${schema_2} # Schema 名称2

├ ├ ├──datasource # 数据源配置

├ ├ ├──rule # 规则配置

├ ├ ├──table # 表结构配置

|

rules全局配置规则

可包括访问 ShardingSphere-Proxy 用户名和密码的权限配置

- !AUTHORITYusers: - root@%:root - sharding@127.0.0.1:shardingprovider: type: NATIVE

|

props属性配置

ShardingSphere相关属性配置

executor-size: 20sql-show: true

|

/schemas/${schemeName}/dataSources

多个数据库连接池的集合,不同数据库连接池属性自适配(例如:DBCP,C3P0,Druid, HikariCP)。

ds_0: dataSourceClassName: com.zaxxer.hikari.HikariDataSource props: url: jdbc:mysql://127.0.0.1:3306/demo_ds_0?serverTimezone=UTC&useSSL=false password: null maxPoolSize: 50 connectionTimeoutMilliseconds: 30000 idleTimeoutMilliseconds: 60000 minPoolSize: 1 username: root maxLifetimeMilliseconds: 1800000ds_1: dataSourceClassName: com.zaxxer.hikari.HikariDataSource props: url: jdbc:mysql://127.0.0.1:3306/demo_ds_1?serverTimezone=UTC&useSSL=false password: null maxPoolSize: 50 connectionTimeoutMilliseconds: 30000 idleTimeoutMilliseconds: 60000 minPoolSize: 1 username: root maxLifetimeMilliseconds: 1800000

|

/schemas/${schemeName}/rule

规则配置,可包括数据分片、读写分离等配置规则

rules:- !SHARDING defaultDatabaseStrategy: standard: shardingAlgorithmName: database-inline shardingColumn: user_id keyGenerators: snowflake: props: worker-id: '123' type: SNOWFLAKE shardingAlgorithms: t-order-inline: props: algorithm-expression: t_order_$->{order_id % 2} type: INLINE database-inline: props: algorithm-expression: ds-$->{user_id % 2} type: INLINE t-order-item-inline: props: algorithm-expression: t_order_item_$->{order_id % 2} type: INLINE tables: t_order: actualDataNodes: ds-$->{0..1}.t_order_$->{0..1} keyGenerateStrategy: column: order_id keyGeneratorName: snowflake logicTable: t_order tableStrategy: standard: shardingAlgorithmName: t-order-inline shardingColumn: order_id

|

/schemas/${schemeName}/table

表结构配置,暂时不支持动态修改

configuredSchemaMetaData: tables: t_order: columns: order_id: caseSensitive: false dataType: 0 generated: true name: order_id primaryKey: true user_id: caseSensitive: false dataType: 0 generated: false name: user_id primaryKey: false address_id: caseSensitive: false dataType: 0 generated: false name: address_id primaryKey: false status: caseSensitive: false dataType: 0 generated: false name: status primaryKey: falseunconfiguredSchemaMetaDataMap: ds-0: - t_order_complex - t_order_interval - t_order_item_complex

|

动态生效

除了table相关的配置无法动态更改之外,其他配置在zookeeper上修改之后,在不重启应用节点时,都会同步到相关服务节点。

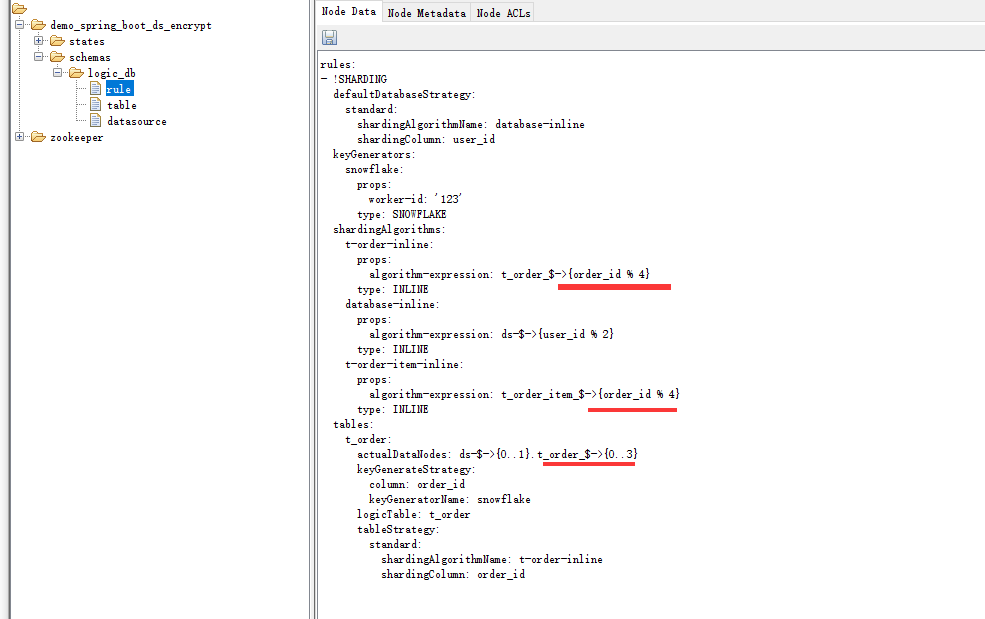

比如,我们修改图9-2所示的红色部分的位置,把t_order_$->{0..1}修改成t_order_$->{0..4},这样就会生成4个分片,并且取模规则也做相应更改。

然后点击保存后,在不重启应用节点时,重新发起接口测试请求,就可以看到修改成功后的结果。

http://localhost:8080/swagger-ui.html

图9-2 zookeeper配置中心

图9-2 zookeeper配置中心

注册中心节点

在zookeeper服务器上,还存在以下节点信息。

namespace ├──states ├ ├──proxynodes ├ ├ ├──${your_instance_ip_a}@${your_instance_pid_x}@${UUID} ├ ├ ├──${your_instance_ip_b}@${your_instance_pid_y}@${UUID} ├ ├ ├──.... ├ ├──datanodes ├ ├ ├──${schema_1} ├ ├ ├ ├──${ds_0} ├ ├ ├ ├──${ds_1} ├ ├ ├──${schema_2} ├ ├ ├ ├──${ds_0} ├ ├ ├ ├──${ds_1} ├ ├ ├──....

|

这个是注册中心节点,用来保存shardingsphere-proxy中间件的服务器实例信息、以及实例运行情况。

运行实例标识由运行服务器的 IP 地址和 PID 构成。

运行实例标识均为临时节点,当实例上线时注册,下线时自动清理。 注册中心监控这些节点的变化来治理运行中实例对数据库的访问等。

由于注册中心会在后续的内容中讲,所以这里暂时不展开。

分布式治理总结

引入zookeeper这样一个角色,可以协助ShardingJDBC完成以下功能

- 配置集中化:越来越多的运行时实例,使得散落的配置难于管理,配置不同步导致的问题十分严重。将配置集中于配置中心,可以更加有效进行管理。

- 配置动态化:配置修改后的分发,是配置中心可以提供的另一个重要能力。它可支持数据源和规则的动态切换。

- 存放运行时的动态/临时状态数据,比如可用的 ShardingSphere 的实例,需要禁用或熔断的数据源等。

- 提供熔断数据库访问程序对数据库的访问和禁用从库的访问的编排治理能力。治理模块仍然有大量未完成的功能(比如流控等)。

到目前为止,ShardingSphere中Sharding-JDBC部分的内容就到这里结束了,另外一个组件Sharding-Proxy就没有展开了,因为它相当于实现了数据库层面的代理,也就是说,不需要开发者在应用程序中配置数据库分库分表的规则,而是直接把Sharding-Proxy当作数据库源连接,Sharding-Proxy相当于Mysql数据库的代理,当请求发送到Sharding-Proxy之后,在Sharding-Proxy上会配置相关的分片规则,然后根据分片规则进行相关处理。